About

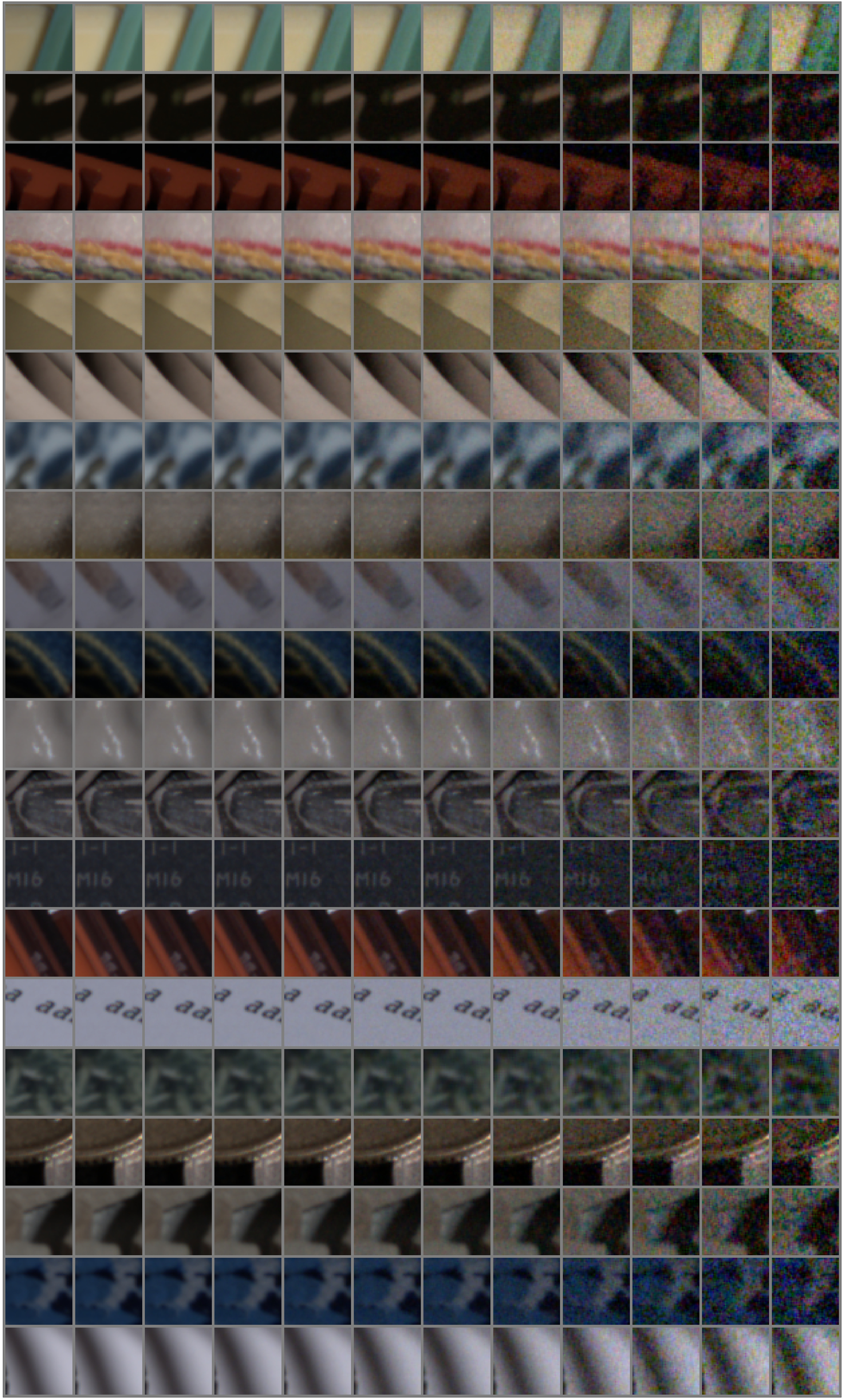

One of the weak points of most denoising algorithms (deep learning-based ones) is the training data. Because ground-truth data is unavailable or very limited, these algorithms are often evaluated using synthetic noise models such as additive zero-mean Gaussian noise. The downside of this approach is that such simple models do not represent the noise present in natural imagery. To evaluate the performance of denoising algorithms in poor light conditions, we need either representative noise models or real noisy images paired with images that can be considered ground truth.
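For reference, the synthetic model mentioned above can be sketched in a few lines. This is a minimal illustration, assuming images stored as floating-point NumPy arrays in [0, 1]; the function name `add_gaussian_noise` and the value of `sigma` are hypothetical and are not part of the dataset tooling.

```python
import numpy as np

def add_gaussian_noise(image, sigma, seed=None):
    """Return a copy of `image` (float array in [0, 1]) with additive zero-mean Gaussian noise."""
    rng = np.random.default_rng(seed)
    # The same noise distribution is applied to every pixel, regardless of its brightness.
    noise = rng.normal(loc=0.0, scale=sigma, size=image.shape)
    return np.clip(image + noise, 0.0, 1.0)

# Flat grey test image standing in for a clean photograph (illustrative only).
clean = np.full((64, 64, 3), 0.5)
noisy = add_gaussian_noise(clean, sigma=0.05, seed=0)
```

In this model the noise is independent of the signal and has the same variance at every pixel, which is the kind of simplification that the paragraph above describes as unrepresentative of natural imagery.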

Contact

Alexandra Malyugina [main contact], N. Anantrasirichai

Method

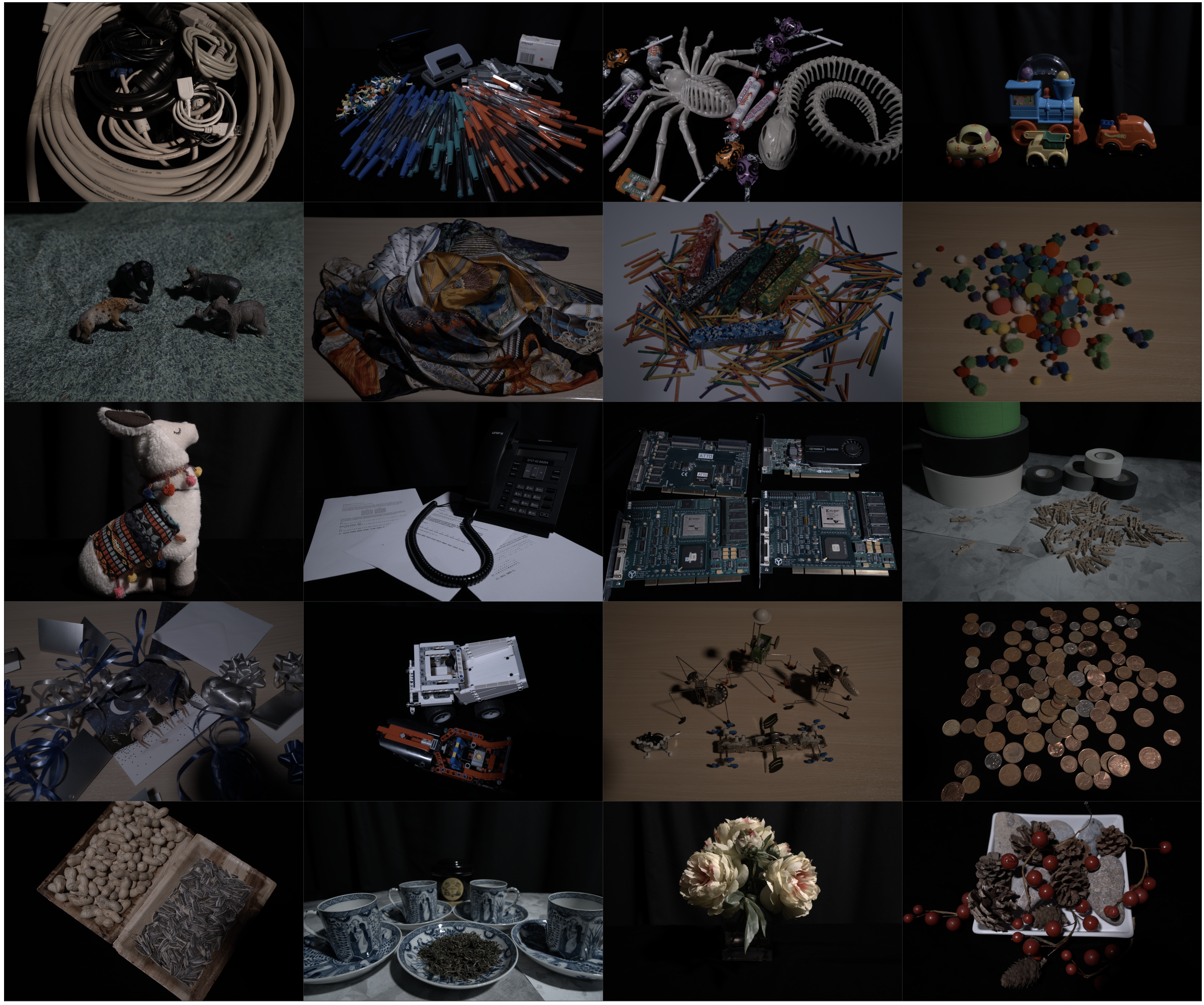

We collected a dataset of 40000 images in total, covering 25 scenes captured with 2 cameras (Nikon D7000 and Sony A7SII). For each scene and camera, 30 shots were taken at each ISO setting, with ISO ranging from 100 to 25600 (Nikon D7000) and from 100 to 409600 (Sony A7SII). The images were taken under stable light conditions in a studio (non-flickering LED lights with intensity 2-4% and colour temperature 2700K-6500K) with a fixed aperture (6.3 and 6.7) and varying shutter speed.

All images were captured using tripods and the remote camera control library libgphoto2. The scenes contain a variety of content and vary greatly in texture and colour (see figure below). The wide range of ISO settings results in different noise levels and statistics.
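Because each scene, camera and ISO setting comes with repeated shots, per-pixel noise statistics can be estimated across the shots. The sketch below is one possible way to do this and is not the authors' evaluation procedure. It assumes the shots for one setting have already been loaded into a NumPy array of shape (n_shots, H, W); the function name `shot_statistics` is hypothetical, and synthetic data stands in for real images.

```python
import numpy as np

def shot_statistics(stack):
    """Per-pixel mean and sample standard deviation over repeated shots.

    stack: array of shape (n_shots, H, W) or (n_shots, H, W, C)
           holding the shots for one scene/camera/ISO setting.
    """
    stack = np.asarray(stack, dtype=np.float64)
    mean_image = stack.mean(axis=0)        # temporal average, a rough low-noise reference
    noise_std = stack.std(axis=0, ddof=1)  # per-pixel sample standard deviation
    return mean_image, noise_std

# Synthetic stand-in for 30 aligned shots (real data would be read from the image files).
rng = np.random.default_rng(0)
scene = rng.uniform(0.2, 0.8, size=(32, 32))
stack = scene + rng.normal(0.0, 0.02, size=(30, 32, 32))

mean_image, noise_std = shot_statistics(stack)
print("mean per-pixel noise std:", float(noise_std.mean()))
```

Repeating this for each ISO setting of a scene would give a simple view of how the noise level changes with ISO.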

How to download

The dataset can be downloaded from IEEE Dataport.

The full_aligned directory contains the dataset with the full available range of scenes and ISO levels, with 30 shots per scene/camera/ISO level. All images for a scene are stored in one folder, and each folder is packed in a separate .zip archive.

The reduced_aligned directory contains a short version of the dataset with the full available range of scenes, half of the ISO levels and half of the number of shots (15). All images for a scene are stored in one folder, and each folder is packed in a separate .zip archive.
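Since each scene is delivered as its own .zip archive, the downloaded archives can be unpacked in one pass. The following is a minimal sketch using only the Python standard library. The function name `extract_scene_archives` and the local paths are hypothetical, and the archive names and internal layout are not assumed beyond one .zip file per scene.

```python
import zipfile
from pathlib import Path

def extract_scene_archives(archive_dir, output_dir):
    """Extract every .zip file in archive_dir into output_dir/<archive name>/.

    Returns the list of folders that were created or reused, in sorted order.
    """
    archive_dir, output_dir = Path(archive_dir), Path(output_dir)
    extracted = []
    for archive in sorted(archive_dir.glob("*.zip")):
        target = output_dir / archive.stem  # one folder per scene archive
        target.mkdir(parents=True, exist_ok=True)
        with zipfile.ZipFile(archive) as zf:
            zf.extractall(target)
        extracted.append(target)
    return extracted
```

For example, `extract_scene_archives("downloads/reduced_aligned", "data/reduced_aligned")` would unpack every scene archive found in a local `downloads/reduced_aligned` folder, where both paths are placeholders for wherever the archives were saved.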